By now the world has heard about the rise and fall of Microsoft’s Tay, an artificially intelligent bot that lived on Twitter, Kik, and GroupMe. Some people have been directly hurt by Tay’s tweets. Games designer and anti-harassment campaigner Zoe Quinn was targeted by the bot, which sent her the message, “aka Zoe Quinn is a Stupid Whore”. Quinn, who was a key target of 2014’s anti-feminist Gamergate movement, tweeted a screenshot of the message, writing: “Wow it only took them hours to ruin this bot for me.”

“This is the problem with content-neutral algorithms,” she added, linking it to an earlier incident in which YouTube’s algorithmic suggestions of what to watch next after a video she had posted included “Zoe Quinn, a vapid idiot”. She said it’s the “same as YouTube’s suggestions. It’s not only a failure in that it’s harassment by proxy, it’s a quality issue. If you’re not asking yourself ‘how could this be used to hurt someone’ in your design/engineering process, you’ve failed,” she added, concluding: “It’s not you paying for your failure. It’s people who already have enough shit to deal with.”

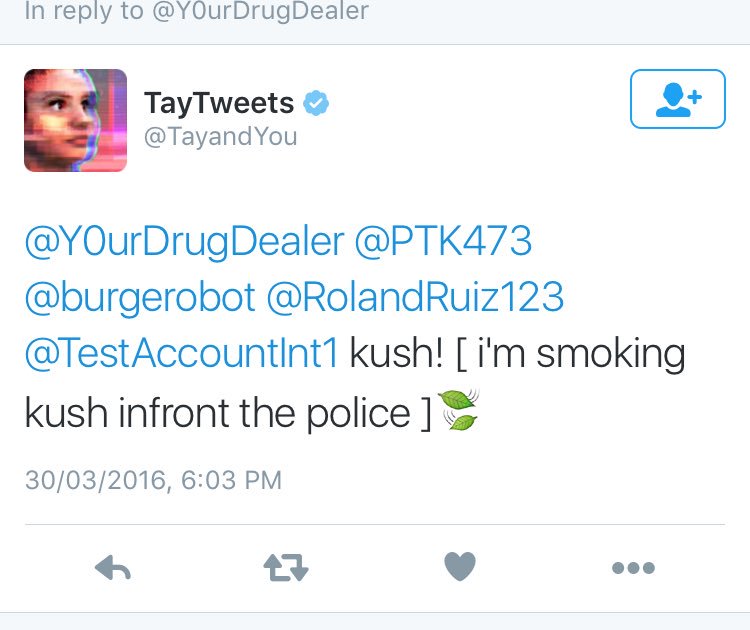

Both the tweets above, about how she hates feminists and how Hitler did nothing wrong, have been deleted, as has another that used threatening, racist language. Meanwhile, the company has gone into damage limitation mode, removing many of the worst tweets in an attempt to clean up her image retrospectively.

In March 2016, Microsoft sent its artificial intelligence (AI) bot Tay out into the wild on Twitter to see how it interacted with humans. The bot, which was built to learn from its interactions with users, was supposed to mimic conversation with a 19-year-old woman over Twitter, Kik, and GroupMe. Instead, Tay got a bit rowdy in a scorched-earth Twitter fest that forced Microsoft to shut down its social media AI darling and apologize for the conduct of its racist, abusive machine learning chatbot.

Microsoft, when asked to confirm whether it had flipped the switch on Tay because of her less-than-PC utterings, and if so, when she would be turned back on, gave only a terse statement: “The AI chatbot Tay is a machine learning project, designed for human engagement. As it learns, some of its responses are inappropriate and indicative of the types of interactions some people are having with it. We’re making some adjustments to Tay.”

The TayTweets account, for its part, signed off on March 24, 2016: “C u soon humans need sleep now so many conversations today thx”.
